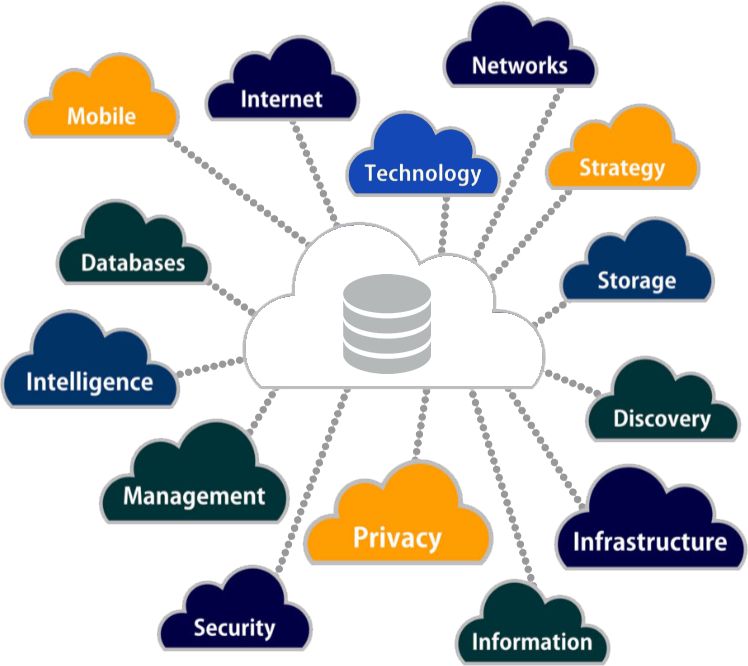

Big Data describes extremely large volumes of data, both structured and unstructured, that can reveal patterns, associations, and trends when analyzed. Big Data inundates businesses on a day-to-day basis. What enterprises do with this data matters far more than how much of it they have. Big Data insights lead to better decision making and strategic business moves.

Many companies see Big Data as a problem because it is difficult to process with traditional systems based on relational databases. In fact, Big Data provides tremendous opportunities to enrich and transform the way your business runs. It offers new pricing models, room for innovation, new ways to engage with customers and partners, greater operational efficiency, new market opportunities, and better monitoring of risk and compliance.

Big Data is broadly defined through the four V’s:

- Volume

Volume represents the actual amount of data available. The magnitude of this data is extremely high because it has been collected over a period of years. According to one study, 90% of this data was collected in the last two years alone.

- Velocity

Velocity describes the speed at which the industry changes and acts on relevant data. Enterprises are overburdened with historical data that is no longer relevant. It is therefore important to analyze this mass of data and keep only what is relevant, which helps in making precise business decisions.

- Variety

Variety refers to the different types of data sources and formats. Typical sources include websites, point-of-sale systems, and CRM and ERP systems.

- Veracity

Veracity refers to the uncertainty in the available data. Because the data is so diverse in volume, variety, and velocity, the refined data needs to be accurate before it can support any further analysis.

3 Major Reasons for Failure of Big Data Projects

Most organizations today want to spend hefty amounts on Big Data, but the downside should not be ignored. According to one study, 92% of organizations are still stuck at the stage of thinking about getting started with Big Data projects. Why? They are afraid to invest their hard-earned money because of the high failure rate others in the market have experienced. Let’s look at the possible reasons that cause Big Data projects to fail.

-

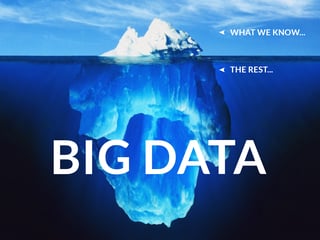

The way we think of Big Data is wrong:

Big Data tends to be treated as a project with a known beginning and a known end rather than as an agile journey of constant exploration. Big Data can reveal patterns that drive tomorrow’s business success, but you should approach it as exploration and not expect a predefined deliverable from it. It is ongoing research aimed at gaining useful insights, not a way to reach fixed conclusions quickly. The true value of this data emerges only when it is placed in a business context; otherwise it is just a huge amount of data.

Another reason for the lack of proper research in Big Data projects is the shortage of skilled data scientists. Although companies use agile solutions and tools such as ETL, Hadoop, and SAS, these tools alone cannot fill the skill gap. The team's level of expertise and experience also determines how well it can carry out proper research. Such experiments need more flexibility and a longer time frame before they yield useful information from Big Data.

- A huge hurdle in terms of ROI:

There needs to be more room for failure and learning at the beginning. Until now, companies have invested only a small portion of their money in maintaining and managing just a part of their Big Data. Most of the data that goes uncaptured comes from customer feedback surveys, emails, social media, and distribution partners.

When companies faced the real volume, variety, and velocity of actual Big Data, they failed to perform. On top of that, enterprises could not cope with the heavy investment needed to bring their existing data setup in sync with these new challenges.

As a result, organizations created their own versions of data marts, which led to misinterpreted information.

- Lack of clarity:

Big Data projects are often not tied to the organization’s own objectives. They are treated as purely scientific exercises with no business goals or metrics. To gain the maximum benefit, you need to point your Big Data at a specific need or problem of your business. To justify your investment in Big Data projects, you need to show results continuously. Businesses demand rapid, agile access to data and expect data-driven discoveries at very low cost.

If handled properly, Big Data offers businesses a wide range of possibilities, today and in the future. The problem lies in the lack of skilled professionals and in poor execution. It is only a matter of time before Big Data becomes an important part of business decision making. If these mistakes are avoided, executing any Big Data strategy becomes a lot easier. One more way to increase your chances of success is to use the right tools for the right project. To find out which tools to use for Big Data visualization, check out this blog:

https://www.newgenapps.com/blog/10-big-data-visualization-tools
