How To Tailor A Cloud Solution For Your Unique IT Infrastructure

Cloud solutions have taken over the market for businesses that need to expand their verticals. Most of the companies are preferring to opt for cloud...

5 min read

Anurag : Apr 13, 2020 6:06:07 PM

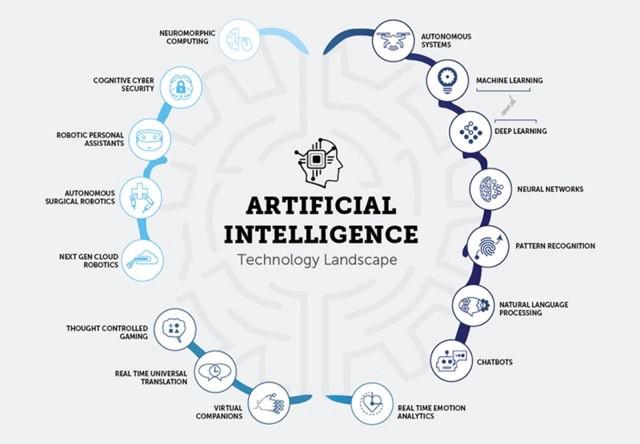

People are working hard every day to change the way society lives with technology, and one of the newest frontiers in computer science is Artificial Intelligence.

Many companies are exploring the use of AI and Machine Learning to secure their systems against cyber-attacks and malware. But because these AI systems learn on their own, they have also reached a level where they can be trained to threaten systems, i.e., to go into all-out attack mode.

It is practically inevitable that AI will play a growing role in our day-to-day lives. However, as with other advances in technology, this also opens an entirely new avenue for misuse by cyber attackers. There is a real possibility of misuse that goes beyond anything we have seen so far, especially as the technology keeps advancing.

Let’s discuss how AI is negatively affecting cybersecurity.

To begin with, the same advantages that cybersecurity specialists gain from the introduction of Artificial Intelligence are available to scammers and hackers too. Cybercriminals can use automation to speed up the process of finding new vulnerabilities, which they can then exploit quickly and easily.

Researchers and professionals are sounding the alarm about the danger AI technology poses to cybersecurity, the critical practice that keeps our computers and data, as well as those of companies and governments, safe from cybercriminals.

According to a study by U.S. cybersecurity software giant Symantec, 978 million people across 20 countries were affected by cybercrime in 2017. Researchers said victims of cyber-attacks lost a total of $172 billion as a result, an average of $142 per victim.

The fear for many is that AI will bring with it new types of digital threats that sidestep common methods of countering attacks.

For instance, machine learning can generate attack scripts at a speed and level of complexity that humans mostly cannot match. Here are further threats that AI poses to cybersecurity.

Researchers from Cambridge and Oxford have predicted that AI could be used to hack self-driving vehicles and drones, raising the possibility of deliberate car crashes and rogue bombings.

For instance, Google’s Waymo autonomous cars use deep learning, and that system could be tricked into reading a stop sign as a green light, causing a fatal accident.

Further, as AI and cybercriminals grow more sophisticated, so will the likelihood of real-time attacks. For instance, a few hackers could coordinate drones into a swarm fitted with explosives to carry out terror attacks or assassinations. AI is the technology that enables cybercriminals to program these attacks more effectively and to link the drones together with only basic knowledge.

We enjoy chatting with chatbots without realizing how much data we are handing over to them. Chatbots can also be programmed to hold conversations with users in a way that persuades them to reveal their financial or personal information, contacts, and so on.

In 2016, a Facebook bot posed as a friend and tricked 10,000 Facebook users into installing malware. Once installed, the malware hijacked those users' Facebook accounts.

AI-enabled botnets can overwhelm human support staff through phone and online support portals. Most of us who use AI conversational assistants such as Amazon's Alexa or Google Assistant do not realize how much they know about us. As IoT-driven devices, they are generally always listening, even to private conversations happening around them. In addition, some chatbots are poorly equipped for secure data transmission, for example lacking Transport Layer Security (TLS) or HTTPS, and can easily be exploited by hackers.
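For readers who build or integrate chatbots, here is a minimal sketch of what enforcing secure transmission can look like in Python, using only the standard library's `ssl` and `urllib.parse` modules. It assumes the bot talks to its backend over HTTPS; `make_tls_context` and `require_https` are hypothetical helper names for illustration, not part of any chatbot platform's API.

```python
import ssl
from urllib.parse import urlparse


def make_tls_context() -> ssl.SSLContext:
    """Build a client-side TLS context that verifies the server.

    ssl.create_default_context() already turns on certificate
    verification and hostname checking; here we also refuse
    protocol versions older than TLS 1.2.
    """
    ctx = ssl.create_default_context()
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


def require_https(url: str) -> str:
    """Hypothetical guard: reject any endpoint not served over HTTPS."""
    if urlparse(url).scheme != "https":
        raise ValueError(f"refusing insecure endpoint: {url}")
    return url


if __name__ == "__main__":
    ctx = make_tls_context()
    # Both should be enabled for a verifying client context.
    print(ctx.verify_mode == ssl.CERT_REQUIRED, ctx.check_hostname)
    print(require_https("https://bot.example.com/api"))
```

In practice the context would be passed to an HTTP client, for example `urllib.request.urlopen(url, context=ctx)`, so that every request from the bot is encrypted and the server's identity is checked.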

AI will also make it easier for low-skilled cyber attackers to carry out complex intrusions simply by throwing computing power at the problem. Hackers often succeed by scaling their operations: the more people they target with phishing schemes, or the more systems they probe, the more likely they are to gain access. Artificial Intelligence gives them a way to scale to a much higher level by automating target selection and launching attacks in bulk.
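The scaling argument can be made concrete with a simple probability model. Assuming, purely for illustration, that each phishing message independently fools its recipient with the same small probability p, the chance that at least one of n messages succeeds is 1 - (1 - p)^n. The 1% per-message success rate below is a hypothetical number, not a measured one.

```python
def chance_of_at_least_one_success(p: float, n: int) -> float:
    """Probability that at least one of n independent attempts succeeds,
    when each attempt succeeds with probability p (illustrative model)."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be between 0 and 1")
    if n < 0:
        raise ValueError("n must be non-negative")
    return 1.0 - (1.0 - p) ** n


# Hypothetical 1% per-message success rate, from manual to automated scale.
for n in (10, 100, 1000):
    print(n, round(chance_of_at_least_one_success(0.01, n), 3))
```

Under these assumptions, ten messages give roughly a 10% chance of at least one success, a hundred give about 63%, and a thousand make success nearly certain. Automation mostly changes n, which is why scale matters so much.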

A basic example of cybercriminals using AI to launch an attack is spear phishing. With the help of machine learning models, AI systems can easily imitate people by generating convincing fake messages, which hackers can use to run more phishing attacks. Hackers can also use AI to build malware that fools the programs or sandboxes that try to detect rogue code before it is deployed in an organization's systems.

And AI-based phishing scams are just the beginning. Using machine learning, cyber attackers could watch for possible vulnerabilities and automate the targeting of potential victims. The same technology could help them analyze AI-based cyber defense systems and produce new kinds of malware that slip past them.

What's more, AI can tailor phishing campaigns to each target by drawing on that individual's social media activity and other online data, making each attack more likely to succeed. Social networking sites like Twitter are a particularly rich source, since their content is characterized by increasingly casual vocabulary and grammar.

By combining histograms, natural language processing, and the scraping of publicly available information, attackers will likely craft increasingly credible-looking malicious emails while reducing the effort required and increasing the speed at which they can launch such attacks. This simultaneous rise in quality and drop in time, effort, and resources means that spear phishing will remain among the most intractable cybersecurity problems of today.

Organizations' AI initiatives present a variety of potential vulnerabilities, including malicious corruption or manipulation of training data, implementation, and component configuration. No industry is immune, and there are many areas in which AI and machine learning already play a role and therefore present increased risks. For instance, credit card fraud may become easier.

Security, environmental, and health systems that control cyber-physical devices, such as those that manage train routing, traffic flow, or dam overflow, may also be compromised.

Other AI-based risks include advanced social network mapping. For instance, AI-powered tools that dig deeper into social networking platforms could help terrorists identify suitable cities and human targets and operate more effectively.

Thus, cybersecurity professionals and defense departments should work together to recognize such dangers and develop solutions.

For many people, parts of their personal lives are already automated through IoT-connected devices and virtual assistants. But this requires a lot of personal information to live in the cloud. This intricate web of connections will create new dimensions of vulnerability, with cyber threats hitting much closer to home; for instance, terrorists, rogue governments, and hackers could target Internet-connected medical devices.

In the years ahead, alarm systems and locks may not be enough to protect us at home. Rogue bots could continuously scan networks, hunting for vulnerabilities. In that world, an attractive target is simply a vulnerable one, which means anyone could become a potential target.

Fortunately, the picture is not all bleak: when appropriate safety measures are taken, these vulnerabilities can be limited. The research on the malicious use of Artificial Intelligence also proactively outlined how the potential malicious use of AI and Machine Learning can be mitigated.

The idea is that cybersecurity organizations and researchers should cooperate to assess the threats and identify practices that strengthen security even in the face of AI-driven attacks. In a future where AI becomes a central element of everyday life, cybersecurity must become an equally central focus.

For now, however, one likely early solution is endpoint protection. Just as a VPN can help reduce potential risks for ordinary internet users, endpoint protection helps organizations prevent data breaches and exploits. An endpoint protection system restricts the paths a security threat can use, through an administrator who controls which internal data and external sites a device or server can access. It is a good option for organizations that rely mainly on antivirus software to cover most of their cybersecurity gaps.
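The administrator-controlled access described here, deciding which internal data and external sites a device or server may reach, can be sketched as a default-deny allowlist check. The `EndpointPolicy` class, its fields, and the example hostnames and data labels are all hypothetical; real endpoint protection products have their own configuration models, and this only illustrates the allowlisting idea.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class EndpointPolicy:
    """Hypothetical administrator-defined policy for one device or server."""
    allowed_sites: set[str] = field(default_factory=set)
    allowed_data: set[str] = field(default_factory=set)

    def may_visit(self, url: str) -> bool:
        # Default deny: only listed hostnames (or their subdomains) pass.
        # urlparse(...).hostname is already lowercased.
        host = urlparse(url).hostname or ""
        return any(host == site or host.endswith("." + site)
                   for site in self.allowed_sites)

    def may_read(self, data_label: str) -> bool:
        # Internal data is reachable only if its label was explicitly granted.
        return data_label in self.allowed_data


policy = EndpointPolicy(allowed_sites={"example.com"},
                        allowed_data={"hr-handbook"})
print(policy.may_visit("https://intranet.example.com/login"))  # True
print(policy.may_visit("https://unknown-site.test/"))          # False
print(policy.may_read("payroll-db"))                           # False
```

The design choice worth noting is the default: anything the administrator has not explicitly allowed is refused, so a compromised device cannot quietly reach new sites or data sets.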

Furthermore, AI lets security teams scale their work of monitoring digital systems and detecting breaches, issues, and incidents. It can even recommend procedures that security teams can use to deal with them.
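As a very small stand-in for this kind of automated monitoring, the sketch below flags hours whose failed-login count sits far above a baseline, using a z-score. This is plain statistics rather than machine learning, and the login counts and the threshold of 3 standard deviations are made-up illustrative values; AI-driven security tools use far richer models and data.

```python
from __future__ import annotations

from statistics import mean, stdev


def flag_anomalies(baseline: list[int], observed: list[int],
                   threshold: float = 3.0) -> list[int]:
    """Return indices of observed values more than `threshold` standard
    deviations above the baseline mean (a simple z-score detector)."""
    mu = mean(baseline)
    sigma = stdev(baseline)  # sample standard deviation; needs >= 2 points
    if sigma == 0:
        # Perfectly flat baseline: treat any increase as anomalous.
        return [i for i, x in enumerate(observed) if x > mu]
    return [i for i, x in enumerate(observed) if (x - mu) / sigma > threshold]


# Hypothetical failed-login counts per hour.
baseline = [4, 6, 5, 7, 5, 6, 4, 5]
observed = [5, 6, 48, 7]
print(flag_anomalies(baseline, observed))  # [2]
```

Only the hour with 48 failed logins is flagged; a security team (or a more capable model) would then investigate it as a possible brute-force attempt.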

Final words...

Human intelligence and Artificial Intelligence need to work together for the best outcomes. Meanwhile, advances in deep learning, a more advanced branch of machine learning, use methods that mimic the workings of the human brain to help AI analyze and reason better. Although we are still at an early stage, AI will be an essential ally in the years to come in combating and defeating cybercriminals.
